The Atari 2600 had 128 bytes of RAM.

Not 128 kilobytes. Not 128 megabytes. One hundred and twenty-eight individual bytes, less than half the length of a single 280-character tweet. That’s the entire working memory developers had to build Pitfall!, Adventure, and Space Invaders. To put it another way: the phone in your pocket, with perhaps 8 GB of RAM, has more than 60 million times as much memory as the Atari 2600.

So how did they do it?

The answer involves some of the cleverest, most audacious programming tricks in computing history. Early game developers weren’t just writing code — they were performing a kind of technical acrobatics, exploiting timing quirks, bending hardware to do things it wasn’t designed for, and turning limitations into features. Some of those tricks still echo through game development today.

What Developers Were Actually Working With

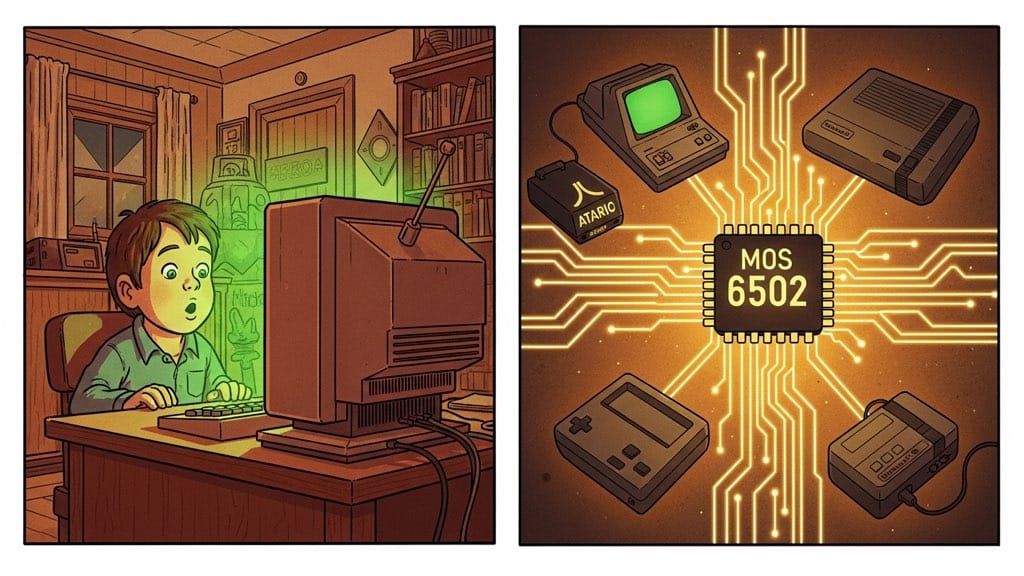

Last time, we looked at the MOS 6502, the $25 chip at the heart of the Apple II, Commodore 64, NES, and Atari 2600. But the chip alone tells only part of the story. The machines built around it varied wildly in what they offered developers.

The Atari 2600, released in 1977, was the most extreme case. Its 6507 CPU (a 6502 variant) ran at 1.19 MHz. It had 128 bytes of RAM. Its graphics chip, called the TIA (Television Interface Adaptor), had no framebuffer where you could draw a picture and then hand it to the display. Instead, the TIA generated the TV signal in real time, one horizontal line at a time, drawing whatever the CPU told it to draw right now.

The NES, released in Japan in 1983, was comparatively luxurious: 2 kilobytes of RAM, a dedicated graphics processor, and hardware support for scrolling backgrounds. But it could draw at most eight sprites on a single horizontal line. Sprites beyond that limit simply weren’t drawn, so games rotated which sprites got dropped from frame to frame, producing the flicker players remember.
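A minimal Python sketch of that flicker trick, under simplifying assumptions: every sprite sits on the same scanline, and the hardware draws the first eight in its sprite table (OAM) order and drops the rest. The function names and the rotate-by-one-per-frame policy are hypothetical; real games used a variety of cycling schemes.

```python
MAX_SPRITES_PER_LINE = 8  # NES PPU limit per scanline


def drawn_this_frame(oam_order: list[int]) -> list[int]:
    """Simplified hardware model: only the first eight sprites in OAM order are drawn."""
    return oam_order[:MAX_SPRITES_PER_LINE]


def visibility_counts(num_sprites: int, num_frames: int, rotate: bool) -> list[int]:
    """Count how many frames each sprite actually gets drawn."""
    counts = [0] * num_sprites
    for frame in range(num_frames):
        # Rotating the starting slot each frame spreads the dropouts across all sprites.
        start = frame % num_sprites if rotate else 0
        order = [(start + i) % num_sprites for i in range(num_sprites)]
        for sprite in drawn_this_frame(order):
            counts[sprite] += 1
    return counts


print(visibility_counts(10, 10, rotate=False))  # sprites 8 and 9 never appear
print(visibility_counts(10, 10, rotate=True))   # every sprite appears in 8 of 10 frames
```

Without rotation, the same two sprites vanish permanently; with it, all ten blink a little, which players perceive as flicker rather than missing enemies.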

The arcade machines that ran Pac-Man (1980) and Space Invaders (1978) had more muscle than home consoles, but they were still running on a handful of kilobytes and processors measured in megahertz, not gigahertz.

These weren’t setbacks to be engineered around. They were the entire playing field.

Racing the Beam

Of all the techniques early developers invented, none is stranger — or more impressive — than what Atari 2600 programmers called “racing the beam.”

Here’s the problem they faced. A standard 1970s television draws its picture by sweeping an electron beam across the screen, left to right, top to bottom, line by line. It does this about 60 times per second (in North America). On the 2600, each horizontal scan line takes exactly 76 CPU cycles: the TIA counts 228 “color clocks” per line, and the CPU gets one cycle for every three color clocks. Between lines there’s a brief moment, called the horizontal blank, while the beam returns to the left edge. At the end of the entire frame, there’s a longer vertical blank while the beam resets to the top.
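The arithmetic behind that budget, worked in Python. The 228-color-clock line, the 68-color-clock horizontal blank, and the common 262-line NTSC frame layout (3 lines of vertical sync, 37 of vertical blank, 192 visible, 30 of overscan) are standard 2600 figures; the constant names are just labels for this sketch.

```python
COLOR_CLOCKS_PER_LINE = 228     # TIA color clocks per scan line
COLOR_CLOCKS_PER_CPU_CYCLE = 3  # the CPU runs at one-third of the TIA clock
HBLANK_COLOR_CLOCKS = 68        # horizontal blank at the start of each line

# Typical NTSC frame layout used by 2600 games, in scan lines.
FRAME = {"vsync": 3, "vblank": 37, "visible": 192, "overscan": 30}

cycles_per_line = COLOR_CLOCKS_PER_LINE // COLOR_CLOCKS_PER_CPU_CYCLE
visible_pixels = COLOR_CLOCKS_PER_LINE - HBLANK_COLOR_CLOCKS
lines_per_frame = sum(FRAME.values())
cycles_per_frame = cycles_per_line * lines_per_frame
cycles_while_drawing = cycles_per_line * FRAME["visible"]

print(cycles_per_line)       # 76
print(visible_pixels)        # 160
print(lines_per_frame)       # 262
print(cycles_per_frame)      # 19912
print(cycles_while_drawing)  # 14592
```

Roughly three-quarters of each frame’s cycles fall on visible lines, where the CPU is busy feeding the TIA; game logic has to squeeze into the blanking and overscan periods.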

Because the TIA had no framebuffer, the CPU had to tell it what to draw during each scan line, while that line was being drawn. If the CPU was a few cycles late, the TIA would draw the wrong thing at the wrong position. A sprite would shift sideways. A background color would bleed into the wrong part of the screen.
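A simplified model of why lateness matters, in Python. It assumes each CPU cycle moves the beam three pixels, that visible pixels begin after the 68-color-clock horizontal blank, and that a write to the background-color register (COLUBK on the real TIA) takes effect at the beam’s current position; the real chip adds small latching delays this sketch ignores. `render_line` is a hypothetical helper.

```python
HBLANK_COLOR_CLOCKS = 68
VISIBLE_PIXELS = 160
COLOR_CLOCKS_PER_CPU_CYCLE = 3


def render_line(writes: list[tuple[int, str]], initial_color: str) -> list[str]:
    """Render one scan line from (cpu_cycle, color) writes to the background register.

    Cycles are counted from the start of the line; writes must be sorted by cycle.
    """
    pending = list(writes)
    color = initial_color
    line = []
    for pixel in range(VISIBLE_PIXELS):
        beam = HBLANK_COLOR_CLOCKS + pixel  # beam position in color clocks
        # Apply every write that has happened by the time the beam reaches this pixel.
        while pending and pending[0][0] * COLOR_CLOCKS_PER_CPU_CYCLE <= beam:
            color = pending.pop(0)[1]
        line.append(color)
    return line


on_time = render_line([(50, "blue")], "black")
late = render_line([(52, "blue")], "black")
print(on_time.index("blue"))  # 82
print(late.index("blue"))     # 88: two cycles late moves the edge six pixels
```

Two cycles of lateness shift the color edge by six pixels, the kind of visible error described above.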

This meant Atari developers had to write code that was cycle-perfect. They knew exactly how many CPU cycles each instruction took, and they counted them out like a musician counting beats, making sure the right values were written to the right hardware registers at precisely the right clock cycle.
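Here is that bookkeeping done mechanically, as a Python sketch. The kernel loop is hypothetical (the `colors` and `gfx` tables are invented labels), but WSYNC, COLUBK, and GRP0 are real TIA registers, and the cycle costs are the 6502’s documented timings for these addressing modes, assuming no page-boundary crossings.

```python
CYCLES_PER_LINE = 76

# 6502 cycle costs for the instruction forms used below.
CYCLES = {
    ("STA", "zp"): 3,
    ("LDA", "abs,Y"): 4,  # +1 if the indexed address crosses a page
    ("DEY", "impl"): 2,
    ("BNE", "rel"): 3,    # branch taken, same page; 2 when not taken
}

# Hypothetical one-line kernel: set background color and player graphics each line.
KERNEL = [
    ("STA", "zp", "WSYNC"),       # stall until the next line begins
    ("LDA", "abs,Y", "colors,Y"),
    ("STA", "zp", "COLUBK"),      # background color
    ("LDA", "abs,Y", "gfx,Y"),
    ("STA", "zp", "GRP0"),        # player 0 graphics
    ("DEY", "impl", ""),
    ("BNE", "rel", "loop"),
]


def loop_cycles(kernel: list[tuple[str, str, str]]) -> int:
    """Total cycles for one pass through the loop."""
    return sum(CYCLES[(op, mode)] for op, mode, _ in kernel)


used = loop_cycles(KERNEL)
print(used, "cycles used,", CYCLES_PER_LINE - used, "to spare")  # 22 cycles used, 54 to spare
```

The spare cycles are what a programmer spends on a second sprite or a playfield change; a single indexed load that happens to cross a page boundary costs one more cycle than planned.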

You weren’t just writing a program. You were writing a program that had to execute in lockstep with a physical electron beam scanning across a glass screen. If you’ve ever wrestled with timing-sensitive code on a Raspberry Pi or microcontroller, you’ll have a faint sense of what this felt like — except those environments forgive a missed microsecond. The TIA did not.

The analogy that comes to mind is writing a piece of music where every note has to land on an exact millisecond — not approximately, but exactly — or the song falls apart. Except the “song” is a video game, the “millisecond” is a single CPU clock cycle, and you’re writing in assembly language with no debugger and 128 bytes of storage.

Games like Pitfall! (1982) and Yars’ Revenge (1982) used this technique to produce visuals that seemed impossible given the hardware. Developers would reprogram the TIA mid-scanline to change colors or reposition sprites, effectively creating different “zones” within a single frame. It was less like programming a computer and more like conducting a very fast, very precise machine.

The Accidental Speed-Up: Space Invaders

Not all classic game tricks were planned. Some were discoveries made while staring at code that behaved strangely.

Space Invaders (1978) is one of the most famous examples of a bug that became a feature.

The game’s premise is simple: rows of aliens march back and forth across the screen, descending toward you. You shoot them. If they reach the bottom, you lose. But players noticed something: the aliens moved faster as you killed them. By the time only a handful remained, they were crossing the screen at a frantic pace. It turned out this was unintentional — and it transformed the game.

Here’s why it happened. The game only had to update and redraw the aliens that were still alive, and it worked through them in turn. As the player destroyed them, each pass through the formation involved less work and finished sooner. Because the aliens’ movement was tied directly to that loop rather than to a fixed clock, fewer aliens meant faster movement.
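A toy model of that feedback loop in Python. It assumes the game moves one living alien per frame at 60 frames per second, so the formation advances one full step only after every survivor has had its turn (a common reading of the arcade code, treated here as an assumption). The 55-alien starting formation (5 rows of 11) matches the arcade game; the function names are hypothetical.

```python
FPS = 60              # video frames per second
STARTING_ALIENS = 55  # 5 rows of 11


def frames_per_formation_step(live_aliens: int) -> int:
    # Assumed: each frame moves one survivor, so a full step takes one frame per survivor.
    return max(live_aliens, 1)


def formation_steps_per_second(live_aliens: int) -> float:
    return FPS / frames_per_formation_step(live_aliens)


for live in (STARTING_ALIENS, 20, 5, 1):
    print(live, "aliens:", round(formation_steps_per_second(live), 2), "steps/s")
```

Under this model the last survivor steps 55 times as often as the full formation did, with no extra code needed to produce the speed-up.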

The developer, Tomohiro Nishikado, could have fixed it. He chose to keep it. The unplanned acceleration created a crescendo of tension: as you cleared the board, victory felt increasingly within reach, and then suddenly the last few aliens were sprinting at you.

The lesson game designers learned: sometimes emergent behavior from hardware constraints is better than whatever you planned.

Four Ghosts, Four Personalities: Pac-Man

Pac-Man (1980) is one of the most analyzed games in history, and for good reason — it managed to create four enemies who feel behaviorally distinct, even though each one is governed by just a few lines of logic.

The four ghosts (Blinky, Pinky, Inky, and Clyde) each follow a different targeting rule. Blinky (red) always targets Pac-Man’s current tile. Pinky (pink) targets a spot four tiles ahead of Pac-Man in the direction he is facing, trying to ambush him from the front. Inky (cyan) combines both characters’ positions: he takes the point two tiles ahead of Pac-Man and doubles the vector from Blinky to that point, which produces erratic flanking behavior. Clyde (orange) chases Pac-Man when he is more than eight tiles away, but retreats toward his home corner when closer, which makes him unpredictable in a different way.
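Those four rules fit in a few lines each. A Python sketch, using (x, y) tile coordinates with y growing downward; the function names and Clyde’s corner tile are hypothetical, and it omits a known quirk of the arcade code where “ahead” also shifts the target to the left when Pac-Man faces up.

```python
Tile = tuple[int, int]  # (x, y) in maze tiles; y grows downward

DIRECTIONS = {"up": (0, -1), "down": (0, 1), "left": (-1, 0), "right": (1, 0)}


def tiles_ahead(pos: Tile, facing: str, n: int) -> Tile:
    dx, dy = DIRECTIONS[facing]
    return (pos[0] + n * dx, pos[1] + n * dy)


def blinky_target(pac: Tile) -> Tile:
    return pac  # chase Pac-Man's current tile


def pinky_target(pac: Tile, facing: str) -> Tile:
    return tiles_ahead(pac, facing, 4)  # ambush from the front


def inky_target(pac: Tile, facing: str, blinky: Tile) -> Tile:
    # Double the vector from Blinky to the tile two ahead of Pac-Man.
    pivot = tiles_ahead(pac, facing, 2)
    return (2 * pivot[0] - blinky[0], 2 * pivot[1] - blinky[1])


def clyde_target(pac: Tile, clyde: Tile, home_corner: Tile) -> Tile:
    # Chase when more than 8 tiles away (compare squared distances), else retreat.
    dx, dy = pac[0] - clyde[0], pac[1] - clyde[1]
    return pac if dx * dx + dy * dy > 64 else home_corner


pac, facing, blinky = (14, 20), "right", (10, 10)
print(pinky_target(pac, facing))         # (18, 20)
print(inky_target(pac, facing, blinky))  # (22, 30)
```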

None of these behaviors required complex AI like you might see in chess-playing programs. They required four different target-picking rules, each simple enough to compute in a few CPU instructions. But the combination of all four ghosts moving simultaneously, each following its own logic, creates a dynamic that feels emergent and alive.
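What turns a target tile into movement is one more small rule, as fan analyses of the arcade code describe it: at each tile, a ghost may not reverse, and it picks whichever open direction leads to the tile closest in a straight line to its target, breaking ties in the order up, left, down, right. A Python sketch, with `is_open` standing in for a hypothetical maze lookup and the real maze’s special no-turn-up zones left out.

```python
from typing import Callable, Optional

Tile = tuple[int, int]
DIRECTIONS = {"up": (0, -1), "left": (-1, 0), "down": (0, 1), "right": (1, 0)}
REVERSE = {"up": "down", "down": "up", "left": "right", "right": "left"}


def choose_direction(ghost: Tile, heading: str, target: Tile,
                     is_open: Callable[[Tile], bool]) -> Optional[str]:
    """Pick the open, non-reversing direction whose next tile is nearest the target."""
    best, best_dist = None, None
    for d in ("up", "left", "down", "right"):  # tie-break order
        if d == REVERSE[heading]:
            continue  # no reversing here; the game forces reversals separately
        dx, dy = DIRECTIONS[d]
        nxt = (ghost[0] + dx, ghost[1] + dy)
        if not is_open(nxt):
            continue
        dist = (nxt[0] - target[0]) ** 2 + (nxt[1] - target[1]) ** 2
        if best_dist is None or dist < best_dist:  # strict <, so earlier directions win ties
            best, best_dist = d, dist
    return best


print(choose_direction((5, 5), "right", (5, 0), lambda tile: True))  # up
```

Combined with the four targeting functions, this greedy step is all it takes to produce chasing, ambushing, and flanking; no ghost ever plans a path.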

The maze wasn’t just decoration, either. It was designed specifically to interact with the ghosts’ targeting algorithms, creating predictable “safe spots” and choke points. The level design and the AI were co-designed. This also explains why players who learned the ghost patterns could achieve high scores that seemed superhuman.

The game ran on a Zilog Z80 processor clocked at 3.072 MHz with about 16 kilobytes of ROM. Every ghost personality, every animation, every sound effect, the entire game fit in a space that is a fraction of the size of a typical email attachment today.

Scrolling a World That Doesn’t Fit: Super Mario Bros.

By 1985, when Nintendo released Super Mario Bros. for the NES, home console hardware had improved considerably over the Atari 2600. But Mario presented a new kind of challenge: a game world far larger than the screen.

The NES had 2 kilobytes of general-purpose RAM and a graphics chip (the PPU, or Picture Processing Unit) that could display a 256×240 pixel image. The PPU had its own 2 kilobytes of video RAM, exactly enough for two “nametables,” the data structure that defined a background screen, at 1,024 bytes each. Mario’s levels were many screens wide. How do you scroll a large world when video memory holds only two screens’ worth of background?

The answer was a technique called “column-by-column loading.” Rather than keeping the entire level in video memory, the game kept only the visible screen plus the columns about to scroll into view. As the player scrolled right, the CPU decoded the next column of tiles from the level data in the cartridge ROM and wrote it into the nametable, overwriting a column that had already scrolled off the left side of the screen. The player never sees this because by the time a column is reused, it’s far off-screen.
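A Python sketch of column-by-column loading, under simplifying assumptions: the level is just a list of column IDs, the two side-by-side nametables form a 64-column ring (32 tile columns each, 8 pixels per tile), and a new column is fetched whenever the scroll position crosses into a new tile column. The built-in check confirms that every column inside the 256-pixel window is intact. The function names are hypothetical, and the real game’s loading schedule differs in detail.

```python
TILE_PX = 8
NT_COLS = 32      # one nametable: 32 tile columns = 256 pixels
SCREEN_PX = 256


def visible_columns(scroll_x: int) -> range:
    """Level columns touched by the 256-pixel window starting at scroll_x."""
    first = scroll_x // TILE_PX
    last = (scroll_x + SCREEN_PX - 1) // TILE_PX
    return range(first, last + 1)


def scroll_level(level: list, max_scroll: int, ring_cols: int = 2 * NT_COLS) -> list:
    """Scroll right pixel by pixel, streaming level columns into a ring of nametable columns."""
    ring = [None] * ring_cols
    # Before scrolling starts, load the first screen plus one column past its right edge.
    for col in range(NT_COLS + 1):
        ring[col % ring_cols] = level[col]
    for scroll_x in range(max_scroll + 1):
        if scroll_x % TILE_PX == 0:
            # Entering a new tile column: fetch the one just past the right edge,
            # overwriting whatever that ring slot held before.
            col = scroll_x // TILE_PX + NT_COLS
            if col < len(level):
                ring[col % ring_cols] = level[col]
        # Self-check: everything inside the window must still be the right column.
        for col in visible_columns(scroll_x):
            if ring[col % ring_cols] != level[col]:
                raise RuntimeError(f"column {col} overwritten while visible (scroll {scroll_x})")
    return ring


level = list(range(200))  # stand-in level: column i holds the value i
scroll_level(level, len(level) * TILE_PX - SCREEN_PX)  # completes with no error
```

Passing `ring_cols=32` (a single nametable) makes the check fail immediately, because the column being written would still be on screen; the second nametable is what gives the loader room to work.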

The scroll position lived in a PPU register (PPUSCROLL, at address $2005). It told the PPU where to begin reading the nametables, effectively a “window” sliding across the background data. The edges of the visible window wrapped around seamlessly because the two nametables were arranged side by side, and the PPU treated them as one continuous surface that wrapped back to the start.
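The “window” idea reduces to modular arithmetic. A Python sketch for horizontal scrolling only, assuming the two nametables sit side by side to form a 512-pixel-wide surface; `source_of_pixel` is a hypothetical helper, not a PPU interface.

```python
NAMETABLE_PX = 256
SURFACE_PX = 2 * NAMETABLE_PX  # two nametables side by side
TILE_PX = 8


def source_of_pixel(scroll_x: int, screen_x: int) -> tuple[int, int, int]:
    """Which nametable, tile column, and pixel within that tile feed screen column screen_x."""
    world_x = (scroll_x + screen_x) % SURFACE_PX  # wraps back to the first nametable
    nametable = world_x // NAMETABLE_PX
    tile_col = (world_x % NAMETABLE_PX) // TILE_PX
    fine_x = world_x % TILE_PX
    return nametable, tile_col, fine_x


print(source_of_pixel(200, 100))  # (1, 5, 4): pixel 300 lands in the second nametable
print(source_of_pixel(500, 20))   # (0, 1, 0): pixel 520 wraps around to the first
```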

This was elegant, but it required careful management of the PPU’s state during the vertical blank period — the brief interval between frames when the electron beam was off-screen. Everything that needed to change on screen had to change during that window. If updates ran long, visual artifacts would bleed onto the display.
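How long is that window? A back-of-the-envelope Python calculation for an NTSC NES, using standard figures: 20 scan lines of vertical blank, 341 PPU dots per line, three PPU dots per CPU cycle, and 513 CPU cycles for the sprite-table (OAM) DMA copy most games run every frame. The 8-cycles-per-byte figure is an assumption: an unrolled `LDA abs,X` / `STA $2007` pair with no page crossings.

```python
VBLANK_LINES = 20           # NTSC vertical blank, in scan lines
PPU_DOTS_PER_LINE = 341
PPU_DOTS_PER_CPU_CYCLE = 3
OAM_DMA_CYCLES = 513        # sprite-table copy; 514 if it starts on an odd CPU cycle
CYCLES_PER_VRAM_BYTE = 8    # assumed: LDA abs,X (4) + STA $2007 (4), unrolled

vblank_cycles = VBLANK_LINES * PPU_DOTS_PER_LINE // PPU_DOTS_PER_CPU_CYCLE
left_after_dma = vblank_cycles - OAM_DMA_CYCLES
max_vram_bytes = left_after_dma // CYCLES_PER_VRAM_BYTE
column_bytes = 240 // 8     # one full-height column of tiles

print(vblank_cycles)   # 2273
print(max_vram_bytes)  # 220
print(max_vram_bytes // column_bytes, "full columns, at best, before any other work")
```

In practice the interrupt handler, attribute writes, and scroll-register updates all compete for the same window, so the real headroom is smaller than this ceiling.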

Super Mario Bros. shipped on 40 kilobytes of ROM: 32 KB of program code and 8 KB of graphics. The entire game, with eight worlds of four levels each, full physics simulation, enemy AI, sound effects, and music, fit in that space.

Why Constraints Produce Genius

There’s a theory about creative work that says constraints focus the mind. When you can do anything, you often do nothing particularly well. When you can do almost nothing, you are forced to find the one clever solution that actually works.

Early game developers had no choice but to find those solutions. They couldn’t brute-force problems with memory or processing power — there wasn’t enough of either. They had to understand their hardware at a level that most modern developers never need to approach. They counted clock cycles. They exploited undocumented chip behaviors. They turned timing quirks into features.

The resulting code was often extraordinary in its efficiency. Rick Maurer’s Space Invaders for the Atari 2600 — a one-person port of the arcade game — is a masterpiece of cycle-counting and memory management. The demoscene (programmers who create visual effects as an art form) still releases new Atari 2600 and Commodore 64 demos today, decades after the hardware was discontinued, still finding headroom that wasn’t supposed to exist.

The techniques those developers pioneered influenced how game engines were built for years afterward. Tile-based rendering, sprite atlases, level streaming — these concepts came from programmers who had to solve real problems with almost nothing.

The Thread That Connects Them

Here’s what I find remarkable about all of this: the Atari 2600 programmer counting scan line cycles, the Space Invaders developer whose loop speed became a game mechanic, the Pac-Man team who designed a maze to channel simple AI into complex behavior, the Super Mario Bros. engineer streaming level data column by column — they were all, in different ways, doing the same thing.

They were finding the thing the hardware could do cheaply, and building around it.

Not fighting the constraints. Not wishing for more memory or a faster chip. Working with what existed, understanding it deeply, and finding the elegance inside the limitation.

That instinct — finding the efficient solution rather than the powerful one — is still worth something. Maybe especially now, when hardware has become so fast that we rarely have to think about it.

If you want to go deeper, look up the book “Racing the Beam” by Nick Montfort and Ian Bogost — it’s a close reading of six Atari 2600 games that treats each one as a piece of software engineering as much as game design. There’s also the brilliant series of “Architecture of Consoles” articles by Rodrigo Copetti, which walks through exactly how each classic console worked under the hood.