In 1950, Claude Shannon sat down and wrote a complete blueprint for a chess-playing computer. He described how it would evaluate positions, search through possible moves, and decide on the best play — step by step, in precise detail.

There was just one problem: no computer existed that could run it.

Shannon published his paper anyway. And in doing so, he sketched out nearly every idea that still sits at the heart of chess engines today, including the one powering your favorite chess app.

The Man Who Invented Information

To understand why this matters, you need to know who Shannon was.

In 1948, two years before the chess paper and while working at Bell Labs, Shannon published “A Mathematical Theory of Communication.” It’s one of the most consequential scientific papers of the twentieth century. In it, he essentially invented the concept of information as something that could be measured, compressed, and transmitted reliably. Every digital message you’ve ever sent, whether an email, a photo, or a streaming video, relies on ideas Shannon worked out in that paper.

He was also, by all accounts, a wonderfully strange person. He unicycled through the hallways of Bell Labs at night. He juggled while riding the unicycle. He built a flame-throwing trumpet. He once constructed a robotic mouse named Theseus that could learn to navigate a maze. Shannon was a serious scientist who thought serious work should also be fun.

So when he turned his attention to chess, it wasn’t a detour. It was vintage Shannon: take an impossibly hard problem, strip it down to its mathematical skeleton, and see what’s actually there.

The Problem With Chess

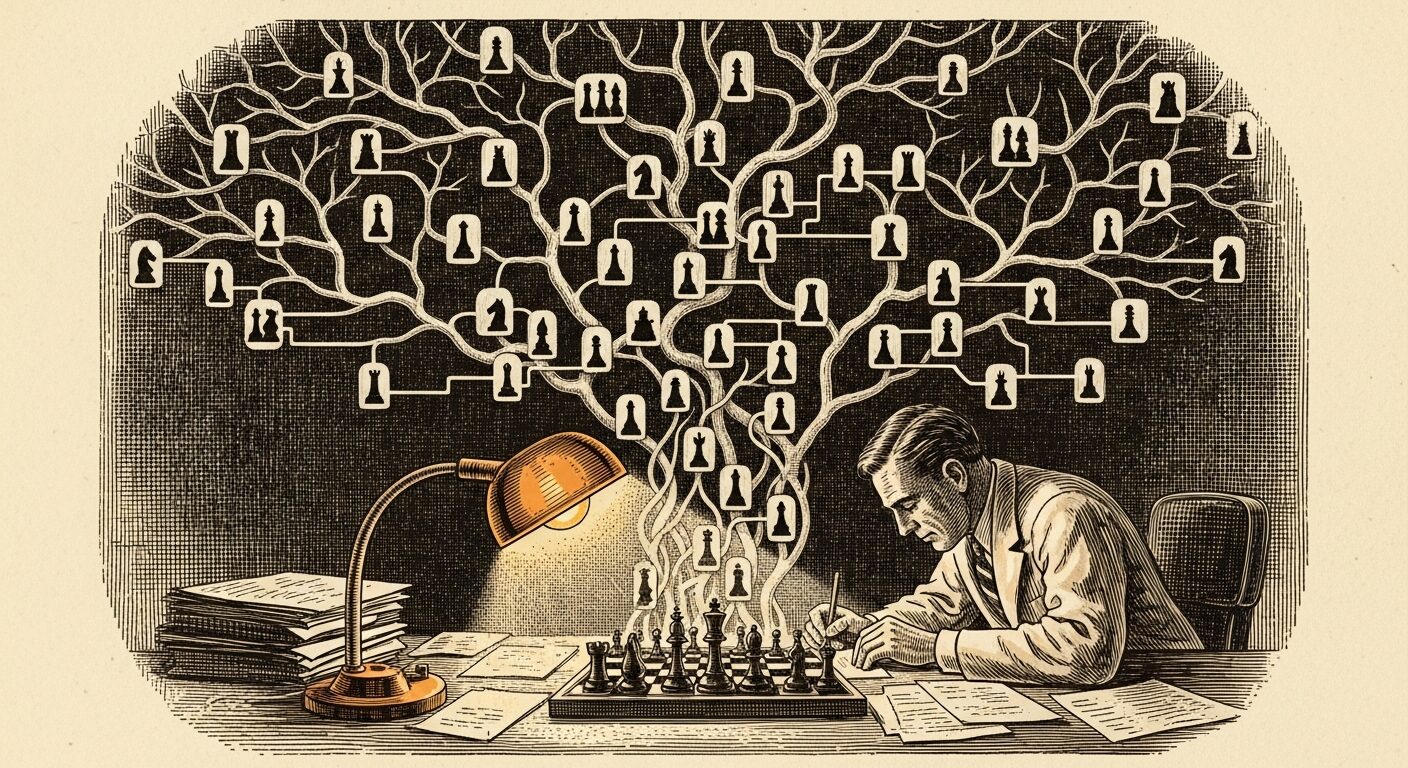

Chess looks like a thinking game, but to a computer, it looks like a tree.

Every position on the board has a set of legal moves, typically around 30. Each of those moves leads to a new position, which has another 30 or so legal moves, which leads to another layer, and so on. By the time you’re looking just four moves ahead (two for each player), you’re dealing with roughly 30⁴ = 810,000 positions. Look eight moves ahead and you’re past 650 billion.
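The growth is easy to check for yourself. Here is a minimal sketch, assuming a constant 30 legal moves in every position (real positions vary) and counting depth in single moves by one player, which chess programmers call plies. The function name is just an illustration.

```python
# Assumed average number of legal moves per position (the rough figure used above).
BRANCHING_FACTOR = 30


def positions_at_depth(plies: int, branching: int = BRANCHING_FACTOR) -> int:
    """Leaf positions after `plies` single moves, assuming a constant branching factor."""
    return branching ** plies


for plies in (2, 4, 6, 8):
    print(f"{plies} plies: {positions_at_depth(plies):,} positions")
```

Every two extra plies multiply the work by 900, which is why adding depth gets expensive so quickly.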

Shannon estimated that the total number of possible chess games (not positions, but games, from start to finish) is something like 10¹²⁰. That number has a name now: the Shannon number. For comparison, the estimated number of atoms in the observable universe is around 10⁸⁰. Chess is, mathematically speaking, incomprehensibly vast.
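Shannon's figure comes from a back-of-the-envelope argument in the paper: roughly 10³ possibilities for each pair of moves (one by White, one by Black), and a typical game lasting about 40 such pairs. The sketch below just redoes that arithmetic. The variable names and the helper are my own, and the atom count is the usual order-of-magnitude estimate, not a precise value.

```python
# Shannon's rough assumptions, as summarized above.
POSSIBILITIES_PER_MOVE_PAIR = 10**3  # one White move followed by one Black move
MOVE_PAIRS_PER_GAME = 40             # length of a "typical" game

ATOMS_IN_OBSERVABLE_UNIVERSE = 10**80  # order-of-magnitude estimate


def order_of_magnitude(n: int) -> int:
    """Exponent of the largest power of ten that is <= n (for positive integers)."""
    return len(str(n)) - 1


game_count = POSSIBILITIES_PER_MOVE_PAIR ** MOVE_PAIRS_PER_GAME
games_per_atom = game_count // ATOMS_IN_OBSERVABLE_UNIVERSE

print(f"possible games: about 10^{order_of_magnitude(game_count)}")
print(f"games per atom: about 10^{order_of_magnitude(games_per_atom)}")
```

Even if every atom in the universe were a computer, each one would still be responsible for about 10⁴⁰ games.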

No computer could search the whole tree. No computer ever will. So the real question Shannon was asking was: how do you play good chess without looking at everything?

Two Strategies for an Impossible Problem

Shannon’s 1950 paper, “Programming a Computer for Playing Chess,” laid out two approaches to this problem. He called them Type A and Type B — and the distinction between them is still debated by chess engine designers today.

Type A is the brute-force approach. Look at every possible move, to a fixed depth, and evaluate all the resulting positions. It’s exhaustive, fair, and completely impractical at any meaningful depth. Shannon recognized this himself — Type A was more of a theoretical baseline than a real proposal.
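To make Type A concrete, here is a minimal sketch of a fixed-depth, look-at-everything search written as plain minimax. It is not Shannon's own notation or code. The `Position` class is a hypothetical stand-in for a real chess position: it carries a made-up static score and a list of the positions reachable in one move.

```python
from dataclasses import dataclass, field


@dataclass
class Position:
    """Hypothetical stand-in for a chess position."""
    score: int                                     # static evaluation, from White's point of view
    children: list = field(default_factory=list)   # one Position per legal move


def type_a_search(pos: Position, depth: int, white_to_move: bool) -> int:
    """Fixed-depth minimax: examine every move at every level, with no pruning."""
    if depth == 0 or not pos.children:
        return pos.score
    results = [type_a_search(child, depth - 1, not white_to_move)
               for child in pos.children]
    # White picks the highest score, Black the lowest.
    return max(results) if white_to_move else min(results)


# A tiny toy tree: White has two moves, and Black has two replies to each.
move_a = Position(score=2, children=[Position(3), Position(-2)])
move_b = Position(score=0, children=[Position(1), Position(2)])
root = Position(score=0, children=[move_a, move_b])

print(type_a_search(root, depth=1, white_to_move=True))  # looks one move ahead
print(type_a_search(root, depth=2, white_to_move=True))  # also considers Black's replies
```

At depth 1 the search prefers the first move because it looks better on the surface. At depth 2 it sees Black's best reply and switches to the second move. That is the whole appeal of searching deeper, and also the whole cost: every extra level visits every branch.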

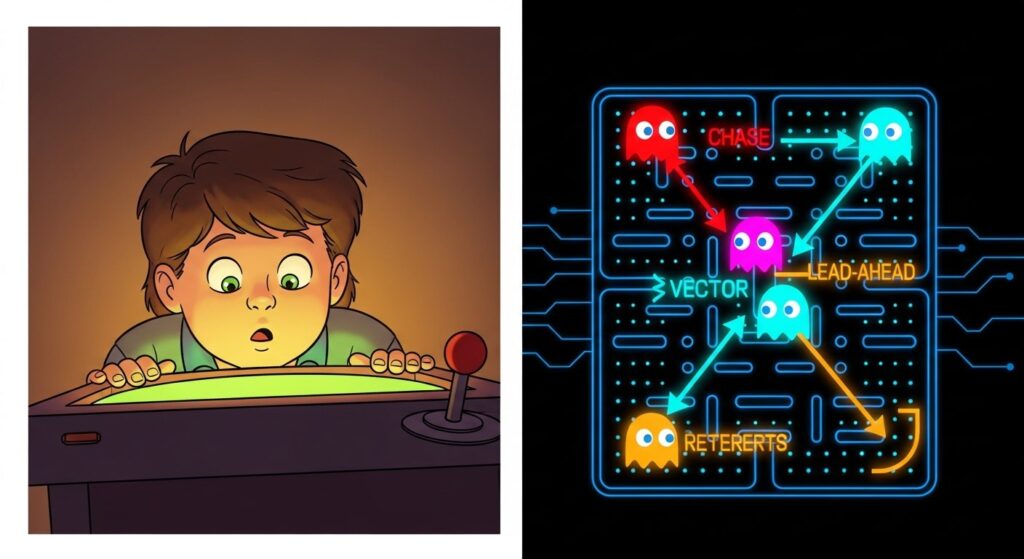

Type B is the interesting one. Instead of looking at everything, you look at the moves that actually matter — the captures, the threats, the pieces that are under attack. You go deeper on those lines and ignore the moves that are obviously unimportant. This is closer to how a human chess player actually thinks: not “what are all 30 of my moves?” but “what are the three moves worth thinking about?”

Type B is harder to build, because it requires the computer to have some sense of what “important” means. Shannon argued that it would produce better chess for the computing power available, and chess programmers have been wrestling with the same tradeoff ever since.
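One way to picture Type B is as the same search with a filter in front of it. The sketch below is an illustration under heavy assumptions, not Shannon's design: each hypothetical `Position` carries a made-up `plausibility` rating (higher means more forcing, such as a capture or a check), and the search only follows the `width` most plausible moves at each level.

```python
from dataclasses import dataclass, field


@dataclass
class Position:
    """Hypothetical stand-in for a chess position reached by some move."""
    score: int                                     # static evaluation, from White's point of view
    plausibility: int = 0                          # made-up "worth a look?" rating for the move
    children: list = field(default_factory=list)   # one Position per legal move


def type_b_search(pos: Position, depth: int, white_to_move: bool, width: int = 2) -> int:
    """Selective minimax: only follow the `width` most plausible moves at each level."""
    if depth == 0 or not pos.children:
        return pos.score
    candidates = sorted(pos.children, key=lambda c: c.plausibility, reverse=True)[:width]
    results = [type_b_search(child, depth - 1, not white_to_move, width)
               for child in candidates]
    return max(results) if white_to_move else min(results)


# Three candidate moves for White: a capture, a check, and a quiet move.
capture = Position(score=1, plausibility=9)
check = Position(score=0, plausibility=8)
quiet = Position(score=5, plausibility=1)
root = Position(score=0, children=[capture, check, quiet])

print(type_b_search(root, depth=1, white_to_move=True, width=2))  # skips the quiet move
print(type_b_search(root, depth=1, white_to_move=True, width=3))  # looks at everything
```

The toy example also shows the risk. With `width=2` the search never looks at the quiet move, which happens to be the best one here. A selective search is only as good as its sense of what matters.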

Scoring a Position

The other half of Shannon’s framework was the evaluation function — a formula for scoring any chess position so the computer can decide which outcomes are good and which are bad.

His example evaluation was elegantly simple. You count up the material on the board: pawns are worth 1 point, knights and bishops 3, rooks 5, queens 9. Subtract Black’s total from White’s total, and you have a rough measure of who’s winning. Then you add adjustments for positional factors: is the king safe? Are your pieces active? Do your pawns form a coherent structure or a mess of isolated islands?
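The material part of that formula fits in a few lines. Here is a minimal sketch that counts material from the piece-placement field of a FEN string, where uppercase letters are White pieces and lowercase letters are Black. The function name is hypothetical, and the positional adjustments (king safety, activity, pawn structure) are deliberately left out.

```python
# Point values from the paragraph above; kings are not counted as material here.
PIECE_VALUES = {"p": 1, "n": 3, "b": 3, "r": 5, "q": 9}


def material_balance(fen_placement: str) -> int:
    """White material minus Black material for a FEN piece-placement string."""
    balance = 0
    for ch in fen_placement:
        value = PIECE_VALUES.get(ch.lower())
        if value is None:
            continue  # digits (empty squares), '/' row separators, and kings
        balance += value if ch.isupper() else -value
    return balance


START = "rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR"
NO_BLACK_QUEEN = "rnb1kbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR"

print(material_balance(START))           # both sides have the same material
print(material_balance(NO_BLACK_QUEEN))  # White is a queen ahead
```

A positive result favors White and a negative one favors Black. A fuller evaluation would add the positional terms on top of this number.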

The specific numbers Shannon used (1, 3, 3, 5, 9) were already familiar rules of thumb among chess players, and they are still the figures you’ll find in chess textbooks today. Many engines start from values close to them, even when the exact numbers have since been tuned. That’s not a coincidence. The values are essentially right, and Shannon showed in 1950 how to put them into a formula a machine could compute.

A Paper No Computer Could Run

Here’s what makes Shannon’s paper remarkable: he published it without being able to test it.

The computers that existed in 1950 were enormous, expensive, one-of-a-kind machines — the kind that filled entire rooms and were booked solid with military and scientific calculations. Nobody was going to hand one over to play chess. Shannon’s program was, in a very literal sense, theoretical. A design without a machine to run it on.

His friend and contemporary Alan Turing had a similar experience. Turing developed his own chess program, called Turochamp, around the same time. Since no computer could run it, Turing ran it himself — looking up the rules of his own algorithm, move by move, spending more than half an hour on each turn. He played through a game manually, acting as a human CPU. Turochamp lost.

Shannon’s program was never hand-simulated. It sat in a journal and waited.

The wait wasn’t long. In November 1951, a programmer named Dietrich Prinz, inspired by Turing’s ideas, wrote the first chess program to actually run on a computer: the Ferranti Mark 1 in Manchester. It could only solve mate-in-two problems, not play a full game. But it ran. The machines had finally caught up to the ideas.

What Shannon Actually Built

The chess paper wasn’t Shannon’s only contribution to early computer chess. He also wrote a shorter, more accessible version for Scientific American in 1950 — “A Chess-Playing Machine” — aimed at curious readers who wanted to understand the concept without the mathematics.

And he built things. Shannon and his wife Betty constructed a physical chess-playing machine — a device with actual hardware, relays, and circuits — that could handle simplified endgame positions. It wasn’t powerful enough for a real game, but it worked. Shannon was always a builder as well as a theorist.

The chess work was, in many ways, a warm-up for later ideas in artificial intelligence. The question of how a computer could make good decisions in a complex environment — not by brute force, but by reasoning selectively — would drive computer science for decades to come. Shannon had framed that question clearly in 1950. Everyone else just had to answer it.

The Blueprint That Lasted

If you’ve read through the harlepengren.com series on building a chess engine in Python — the PyMinMaximus series — some of this should sound familiar. The minimax algorithm, the evaluation function, the idea of searching ahead and scoring the resulting positions: that’s all Shannon. The concepts he described in a 1950 journal article are still the foundation you build on today.

Modern chess engines have layered enormous sophistication on top of that foundation — alpha-beta pruning, neural network evaluation, opening books, endgame tablebases. But strip all of that away and you’re back to Shannon’s original questions: how do you score a position, and how deep do you look?

He asked those questions before any computer could answer them. The fact that we’re still working with his framework seventy-five years later says everything about how well he’d understood the problem.

A Curiosity to Pursue

Definitely check out Shannon’s original 1950 paper, “Programming a Computer for Playing Chess.” It’s dense in places but surprisingly readable, and the ideas are clearer than you might expect from a 75-year-old technical document.

Or try this: find a chess engine’s source code — Stockfish is open source — and look at its evaluation function. Then look at Shannon’s. The distance between 1950 and today is much shorter than you’d think.